$750K in Seed Grants Awarded by UMD-Led Coalition on Trustworthy AI

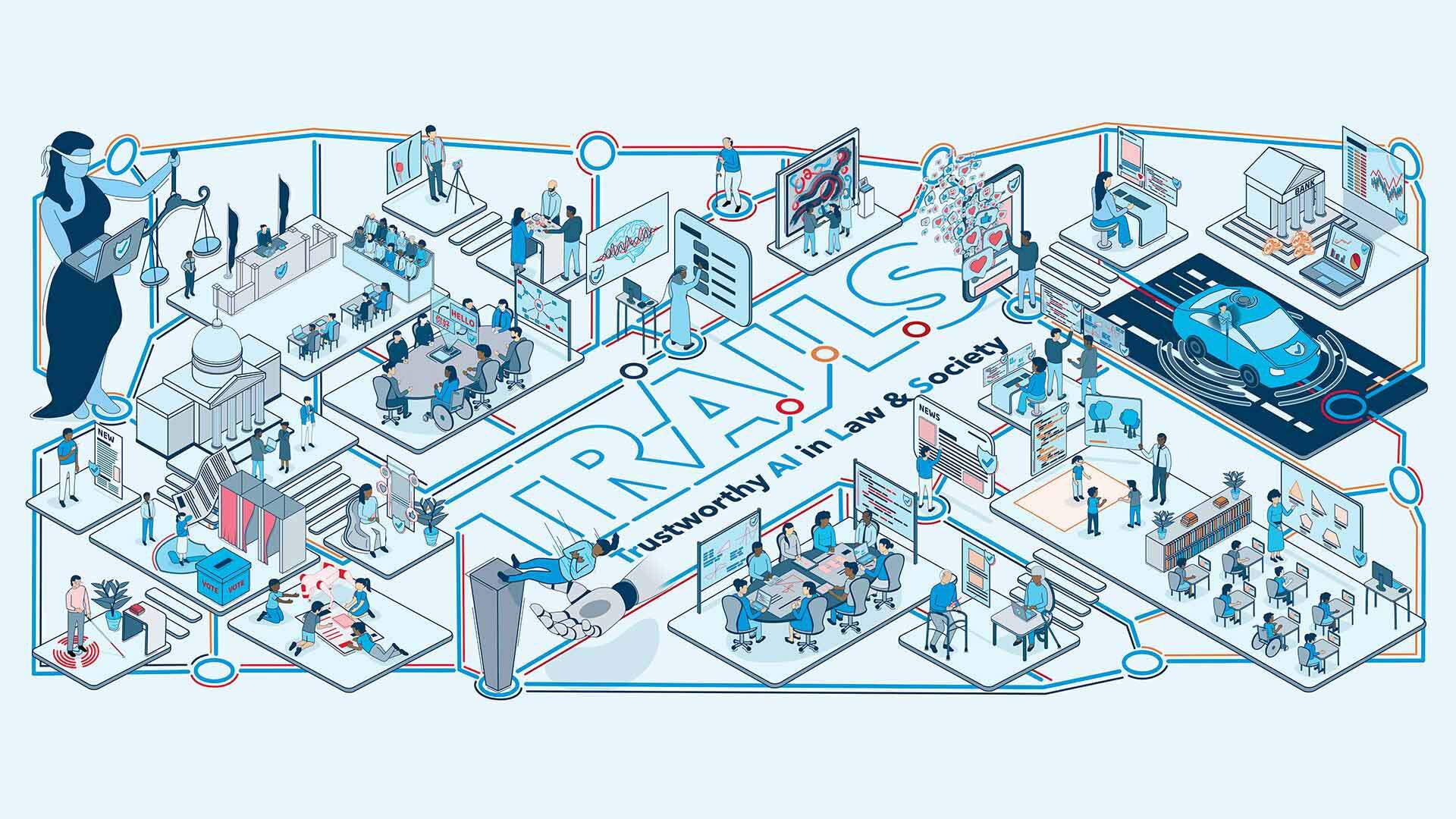

The Institute for Trustworthy AI in Law & Society (TRAILS), an innovative coalition led by the University of Maryland (UMD), has awarded over $750,000 in seed funding to seven research teams dedicated to advancing the science and practice of trustworthy artificial intelligence. Comprising researchers and students from UMD, George Washington University, Morgan State University, and Cornell University, these teams will pursue projects exploring how ethical frameworks, public engagement, and rigorous governance can foster trust in artificial intelligence technologies.

TRAILS was established in May 2023, catalyzed by a $20 million grant from the National Science Foundation (NSF) and the National Institute of Standards and Technology (NIST). This ambitious initiative is focused on developing participatory research models that prioritize human rights, societal benefit, and the responsible deployment of artificial intelligence in real-world scenarios. In a time of rapid AI advancement and growing public scrutiny, TRAILS’ mission resonates strongly with policymakers, academics, and industry leaders calling for accountable and transparent AI development.

Advancing AI Through Interdisciplinary Collaboration

The newly awarded seed grants, each ranging from $50,000 to $150,000, reflect the coalition’s strategic emphasis on research that bridges technical innovation with societal values. These projects are aligned with TRAILS’ four core research thrusts: participatory AI design, development of robust AI methods, sense-making for users and stakeholders, and inclusive governance structures.

Dr. Hal Daumé III, director of TRAILS and a professor of computer science at UMD, said: “As we continue to expand our impact and outreach, we’re aware of the need to align our technological expertise, which is quite robust, with new methodologies we’re developing that can help people and organizations realize the full potential of AI. If people don’t see their concerns and values reflected in AI, they’re unlikely to trust or use it.”

This round marks the third cycle of TRAILS seed funding. The selected projects are expected not only to yield immediate research outputs but also to lay the groundwork for further external funding, long-term partnerships, and contributions to national policy. Notably, a previous TRAILS-funded project led to a $4.5 million grant from the Gates Foundation and the Walton Family Foundation, illustrating the program’s capacity to accelerate impactful research.

Key Grant Projects: Shaping the Future of Trustworthy AI

The seven projects selected for this round of funding are:

- Open-Source LLM Auditing Framework: A team from GW, UMD, and Morgan State is creating an open-source framework for auditing large language models (LLMs), such as those that power ChatGPT. By empowering organizations and individuals to test AI for context- and user-specific trustworthiness, the project aims to democratize high-stakes AI evaluation. This work builds on the recent surge of open-source AI assessment tools and is particularly relevant as major tech firms and regulators grapple with the risks generative AI poses in fields like cybersecurity and healthcare.

- AI Literacy for Youth and Families: Researchers are undertaking studies to understand and address the technical and instructional needs of young people and their families as they interact with AI. The project will assess community perceptions of AI literacy, identify barriers to effective AI education, and map out the required infrastructure for sustainable learning environments. Recent surveys indicate that less than 20% of high school students in the U.S. feel adequately prepared to use AI tools responsibly, underscoring the urgent need for initiatives like this one.

- Evaluating AI in Disaster Recovery: Leveraging real-world data from the 2025 Los Angeles wildfires, this project examines the reliability of LLMs in synthesizing public safety information during emergencies. It seeks to understand how disaster survivors and responders evaluate AI-generated updates versus traditional media and government channels. In the context of intensifying natural disasters and misinformation threats, the project’s outcomes may inform federal guidelines on AI in emergency management.

- Red Teaming for Generative AI Safety: Inspired by the growing practice of crowdsourced security testing, a UMD-led team will organize competition-style events in which diverse participants identify, report, and analyze vulnerabilities and biases in state-of-the-art AI models. The findings are expected to contribute valuable data on how experienced and novice users build (or lose) trust in generative AI systems over time.

- Systemic AI Governance: A GW-based project aims to map multi-layered AI governance ecosystems, drawing lessons from mature fields like aviation and finance. By designing frameworks that clarify stakeholder roles and responsibilities, the project responds directly to calls in the White House’s 2023 Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence (Executive Order 14110) for more granular, risk-based oversight.

- Copyright and AI Creativity: Cornell and GW researchers will carry out pioneering experiments on how jurors and AI users perceive “substantial similarity” in copyright cases involving AI-generated works. As landmark legal battles, such as those between copyright holders and major AI companies, shape the future of digital authorship, this research could influence both case law and industry best practices.

- Multimodal AI Question Answering: A UMD-led team is developing challenging, real-world multimodal QA datasets to benchmark human versus AI abilities. By exposing and analyzing current weaknesses in leading models’ performance on tasks such as visual question answering, the project hopes to accelerate trustworthy AI development in consumer-facing applications like search, accessibility, and education technology.

Together, these projects address technical limitations while actively advancing the conversation about the ethical, social, and legal implications of widespread AI adoption.

Building a Foundation for Responsible AI

The announcement of these seed grants comes amid mounting calls from the public and regulatory bodies for greater transparency and accountability in the deployment of AI systems. In 2024, the European Union adopted the AI Act, widely considered the world’s first comprehensive AI regulation, while U.S. lawmakers have introduced multiple legislative proposals concerning AI transparency, safety standards, and bias mitigation. Academic-led initiatives like TRAILS are increasingly recognized as vital connectors between scientific innovation, public service, and regulatory frameworks.

Dr. David Broniatowski, deputy director of TRAILS and a professor at George Washington University, said: “We’re investing in the future of AI with this latest cohort of seed projects. These ambitious endeavors are strategically aligned, impact-driven initiatives that will advance the science underlying AI adoption, shaping future conversations on AI governance and trust.”

Over the next year, grant recipients will launch pilot studies, develop educational resources, and release open-source tools designed to serve stakeholders from K-12 educators to government policymakers. Their progress will be closely followed not only by the academic community but also by technology firms, policymakers, and civil society organizations seeking best practices for safe and beneficial AI applications.

Conclusion: A National Model for AI Innovation and Trust

As AI technologies play an increasing role in personal, professional, and civic life, the work of TRAILS and its partner institutions exemplifies the potential for universities to lead in responsible innovation. By integrating multidisciplinary expertise and centering the needs and rights of diverse communities, these efforts promise to shape a future in which AI is both powerful and principled, a vision that is more critical now than ever.